Fashion Mnist Push Accuracy Higher Than 50 Percent

Deep Learning CNN for Fashion-MNIST Clothing Classification

Last Updated on August 28, 2020

The Style-MNIST clothing classification problem is a new standard dataset used in figurer vision and deep learning.

Although the dataset is relatively unproblematic, information technology can be used as the basis for learning and practicing how to develop, evaluate, and apply deep convolutional neural networks for image classification from scratch. This includes how to develop a robust test harness for estimating the performance of the model, how to explore improvements to the model, and how to save the model and later load information technology to make predictions on new information.

In this tutorial, you lot will detect how to develop a convolutional neural network for clothing classification from scratch.

Later completing this tutorial, you will know:

- How to develop a test harness to develop a robust evaluation of a model and establish a baseline of functioning for a classification job.

- How to explore extensions to a baseline model to improve learning and model capacity.

- How to develop a finalized model, evaluate the operation of the final model, and use information technology to make predictions on new images.

Kick-start your project with my new book Deep Learning for Computer Vision, including step-past-pace tutorials and the Python source code files for all examples.

Let's get started.

- Update Jun/2019: Fixed minor issues where the model was divers outside of the CV loop. Updated results (thanks Aditya).

- Updated Oct/2019: Updated for Keras 2.3 and TensorFlow two.0.

How to Develop a Deep Convolutional Neural Network From Scratch for Fashion MNIST Clothing Classification

Photo by Zdrovit Skurcz, some rights reserved.

Tutorial Overview

This tutorial is divided into five parts; they are:

- Way MNIST Habiliment Classification

- Model Evaluation Methodology

- How to Develop a Baseline Model

- How to Develop an Improved Model

- How to Finalize the Model and Brand Predictions

Desire Results with Deep Learning for Computer Vision?

Take my free 7-day email crash class at present (with sample code).

Click to sign-up and also get a complimentary PDF Ebook version of the course.

Fashion MNIST Clothing Classification

The Fashion-MNIST dataset is proposed as a more challenging replacement dataset for the MNIST dataset.

It is a dataset comprised of lx,000 small square 28×28 pixel grayscale images of items of x types of habiliment, such as shoes, t-shirts, dresses, and more. The mapping of all 0-nine integers to class labels is listed beneath.

- 0: T-shirt/top

- 1: Trouser

- two: Pullover

- 3: Dress

- 4: Coat

- v: Sandal

- 6: Shirt

- 7: Sneaker

- 8: Purse

- 9: Ankle kicking

It is a more challenging classification problem than MNIST and tiptop results are achieved past deep learning convolutional neural networks with a classification accuracy of about 90% to 95% on the hold out test dataset.

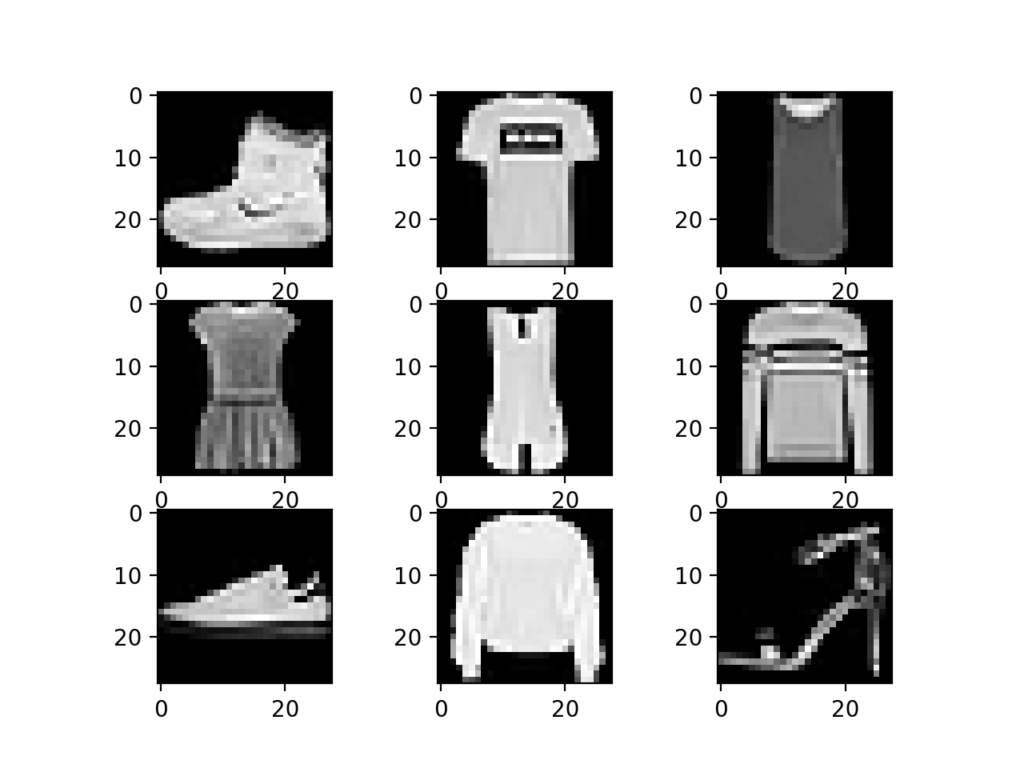

The instance beneath loads the Fashion-MNIST dataset using the Keras API and creates a plot of the get-go nine images in the training dataset.

| # example of loading the fashion mnist dataset from matplotlib import pyplot from keras . datasets import fashion _mnist # load dataset ( trainX , trainy ) , ( testX , testy ) = fashion_mnist . load_data ( ) # summarize loaded dataset print ( 'Train: Ten=%s, y=%s' % ( trainX . shape , trainy . shape ) ) print ( 'Exam: X=%due south, y=%due south' % ( testX . shape , testy . shape ) ) # plot start few images for i in range ( 9 ) : # define subplot pyplot . subplot ( 330 + 1 + i ) # plot raw pixel data pyplot . imshow ( trainX [ i ] , cmap = pyplot . get_cmap ( 'gray' ) ) # show the figure pyplot . prove ( ) |

Running the case loads the Fashion-MNIST train and exam dataset and prints their shape.

We can see that there are threescore,000 examples in the training dataset and 10,000 in the test dataset and that images are indeed square with 28×28 pixels.

| Train: X=(60000, 28, 28), y=(60000,) Test: X=(10000, 28, 28), y=(10000,) |

A plot of the commencement nine images in the dataset is also created showing that indeed the images are grayscale photographs of items of article of clothing.

Plot of a Subset of Images From the Fashion-MNIST Dataset

Model Evaluation Methodology

The Fashion MNIST dataset was developed as a response to the wide use of the MNIST dataset, that has been effectively "solved" given the use of modern convolutional neural networks.

Fashion-MNIST was proposed to be a replacement for MNIST, and although information technology has not been solved, it is possible to routinely achieve error rates of 10% or less. Like MNIST, it can be a useful starting betoken for developing and practicing a methodology for solving paradigm classification using convolutional neural networks.

Instead of reviewing the literature on well-performing models on the dataset, we can develop a new model from scratch.

The dataset already has a well-defined railroad train and exam dataset that we tin can use.

In order to estimate the functioning of a model for a given training run, we can farther separate the preparation set into a train and validation dataset. Performance on the train and validation dataset over each run tin and then be plotted to provide learning curves and insight into how well a model is learning the trouble.

The Keras API supports this by specifying the "validation_data" argument to the model.fit() function when grooming the model, that volition, in turn, return an object that describes model operation for the chosen loss and metrics on each training epoch.

| # record model operation on a validation dataset during training history = model . fit ( . . . , validation_data = ( valX , valY ) ) |

In order to estimate the performance of a model on the trouble in general, we tin can use k-fold cantankerous-validation, possibly 5-fold cantankerous-validation. This volition give some account of the model's variance with both respect to differences in the training and test datasets and the stochastic nature of the learning algorithm. The operation of a model can exist taken as the mean performance across m-folds, given with the standard difference, that could be used to approximate a confidence interval if desired.

We tin use the KFold course from the scikit-learn API to implement the k-fold cross-validation evaluation of a given neural network model. There are many ways to achieve this, although we tin cull a flexible approach where the KFold is only used to specify the row indexes used for each split.

| # case of one thousand-fold cv for a neural net information = . . . # prepare cantankerous validation kfold = KFold ( v , shuffle = True , random_state = 1 ) # enumerate splits for train_ix , test_ix in kfold . split ( information ) : model = . . . . . . |

We volition concur dorsum the actual exam dataset and use it equally an evaluation of our final model.

How to Develop a Baseline Model

The first step is to develop a baseline model.

This is critical as it both involves developing the infrastructure for the test harness so that any model we pattern can be evaluated on the dataset, and information technology establishes a baseline in model performance on the problem, by which all improvements can be compared.

The blueprint of the test harness is modular, and we tin can develop a carve up function for each piece. This allows a given aspect of the test harness to be modified or inter-changed, if we want, separately from the rest.

Nosotros tin can develop this test harness with five key elements. They are the loading of the dataset, the preparation of the dataset, the definition of the model, the evaluation of the model, and the presentation of results.

Load Dataset

We know some things about the dataset.

For instance, we know that the images are all pre-segmented (east.g. each image contains a single item of wear), that the images all accept the same square size of 28×28 pixels, and that the images are grayscale. Therefore, we can load the images and reshape the data arrays to have a single color channel.

| # load dataset ( trainX , trainY ) , ( testX , testY ) = fashion_mnist . load_data ( ) # reshape dataset to accept a single aqueduct trainX = trainX . reshape ( ( trainX . shape [ 0 ] , 28 , 28 , 1 ) ) testX = testX . reshape ( ( testX . shape [ 0 ] , 28 , 28 , 1 ) ) |

Nosotros also know that there are ten classes and that classes are represented as unique integers.

We can, therefore, utilise a one hot encoding for the course chemical element of each sample, transforming the integer into a ten element binary vector with a 1 for the index of the course value. Nosotros tin can achieve this with the to_categorical() utility office.

| # i hot encode target values trainY = to_categorical ( trainY ) testY = to_categorical ( testY ) |

The load_dataset() office implements these behaviors and can exist used to load the dataset.

| # load train and test dataset def load_dataset ( ) : # load dataset ( trainX , trainY ) , ( testX , testY ) = fashion_mnist . load_data ( ) # reshape dataset to have a single channel trainX = trainX . reshape ( ( trainX . shape [ 0 ] , 28 , 28 , 1 ) ) testX = testX . reshape ( ( testX . shape [ 0 ] , 28 , 28 , 1 ) ) # one hot encode target values trainY = to_categorical ( trainY ) testY = to_categorical ( testY ) return trainX , trainY , testX , testY |

Prepare Pixel Data

We know that the pixel values for each image in the dataset are unsigned integers in the range betwixt blackness and white, or 0 and 255.

We do not know the best manner to scale the pixel values for modeling, but we know that some scaling volition be required.

A adept starting indicate is to normalize the pixel values of grayscale images, due east.grand. rescale them to the range [0,1]. This involves first converting the data type from unsigned integers to floats, so dividing the pixel values by the maximum value.

| # convert from integers to floats train_norm = train . astype ( 'float32' ) test_norm = test . astype ( 'float32' ) # normalize to range 0-i train_norm = train_norm / 255.0 test_norm = test_norm / 255.0 |

The prep_pixels() function beneath implement these behaviors and is provided with the pixel values for both the railroad train and test datasets that will need to be scaled.

| # scale pixels def prep_pixels ( railroad train , test ) : # convert from integers to floats train_norm = railroad train . astype ( 'float32' ) test_norm = exam . astype ( 'float32' ) # normalize to range 0-1 train_norm = train_norm / 255.0 test_norm = test_norm / 255.0 # render normalized images return train_norm , test_norm |

This function must exist called to set up the pixel values prior to any modeling.

Ascertain Model

Side by side, we demand to define a baseline convolutional neural network model for the problem.

The model has two master aspects: the characteristic extraction forepart comprised of convolutional and pooling layers, and the classifier backend that will make a prediction.

For the convolutional forepart-cease, we can get-go with a single convolutional layer with a modest filter size (3,3) and a modest number of filters (32) followed by a max pooling layer. The filter maps can and then be flattened to provide features to the classifier.

Given that the trouble is a multi-class classification, we know that we will require an output layer with 10 nodes in society to predict the probability distribution of an epitome belonging to each of the 10 classes. This will also require the employ of a softmax activation role. Between the feature extractor and the output layer, nosotros can add a dense layer to translate the features, in this example with 100 nodes.

All layers will apply the ReLU activation function and the He weight initialization scheme, both best practices.

We volition use a conservative configuration for the stochastic gradient descent optimizer with a learning rate of 0.01 and a momentum of 0.nine. The chiselled cantankerous-entropy loss role will be optimized, suitable for multi-course nomenclature, and nosotros will monitor the nomenclature accurateness metric, which is appropriate given we accept the same number of examples in each of the 10 classes.

The define_model() function beneath volition define and return this model.

| # define cnn model def define_model ( ) : model = Sequential ( ) model . add ( Conv2D ( 32 , ( three , 3 ) , activation = 'relu' , kernel_initializer = 'he_uniform' , input_shape = ( 28 , 28 , one ) ) ) model . add together ( MaxPooling2D ( ( 2 , 2 ) ) ) model . add ( Flatten ( ) ) model . add ( Dense ( 100 , activation = 'relu' , kernel_initializer = 'he_uniform' ) ) model . add ( Dense ( 10 , activation = 'softmax' ) ) # compile model opt = SGD ( lr = 0.01 , momentum = 0.9 ) model . compile ( optimizer = opt , loss = 'categorical_crossentropy' , metrics = [ 'accurateness' ] ) render model |

Evaluate Model

Afterwards the model is defined, nosotros need to evaluate information technology.

The model will be evaluated using five-fold cantankerous-validation. The value of k=5 was chosen to provide a baseline for both repeated evaluation and to not be too large as to require a long running time. Each examination set will be 20% of the grooming dataset, or virtually 12,000 examples, close to the size of the bodily exam set for this trouble.

The preparation dataset is shuffled prior to being split and the sample shuffling is performed each time and so that whatever model we evaluate will have the aforementioned train and exam datasets in each fold, providing an apples-to-apples comparison.

Nosotros will railroad train the baseline model for a minor 10 training epochs with a default batch size of 32 examples. The test set for each fold will exist used to evaluate the model both during each epoch of the preparation run, then we can later create learning curves, and at the terminate of the run, so we tin can estimate the performance of the model. As such, we volition keep track of the resulting history from each run, as well as the classification accuracy of the fold.

The evaluate_model() function below implements these behaviors, taking the training dataset as arguments and returning a list of accuracy scores and training histories that can exist later summarized.

| ane 2 3 four five 6 seven 8 9 10 xi 12 thirteen 14 xv sixteen 17 xviii xix xx | # evaluate a model using g-fold cantankerous-validation def evaluate_model ( dataX , dataY , n_folds = 5 ) : scores , histories = list ( ) , listing ( ) # prepare cross validation kfold = KFold ( n_folds , shuffle = True , random_state = ane ) # enumerate splits for train_ix , test_ix in kfold . split up ( dataX ) : # define model model = define_model ( ) # select rows for railroad train and test trainX , trainY , testX , testY = dataX [ train_ix ] , dataY [ train_ix ] , dataX [ test_ix ] , dataY [ test_ix ] # fit model history = model . fit ( trainX , trainY , epochs = 10 , batch_size = 32 , validation_data = ( testX , testY ) , verbose = 0 ) # evaluate model _ , acc = model . evaluate ( testX , testY , verbose = 0 ) print ( '> %.3f' % ( acc * 100.0 ) ) # suspend scores scores . suspend ( acc ) histories . append ( history ) return scores , histories |

Present Results

One time the model has been evaluated, we tin can present the results.

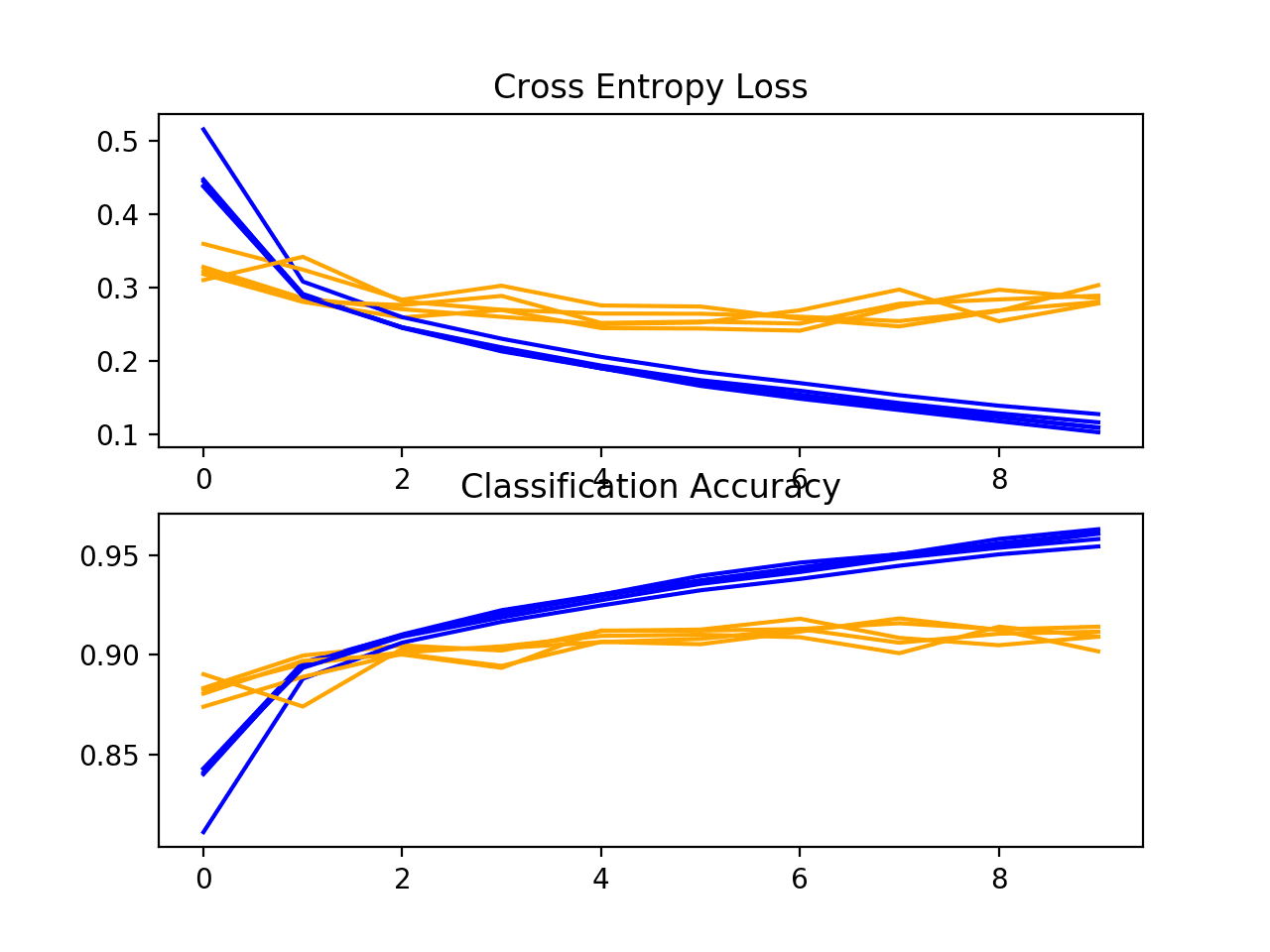

There are 2 key aspects to present: the diagnostics of the learning behavior of the model during training and the estimation of the model performance. These can be implemented using dissever functions.

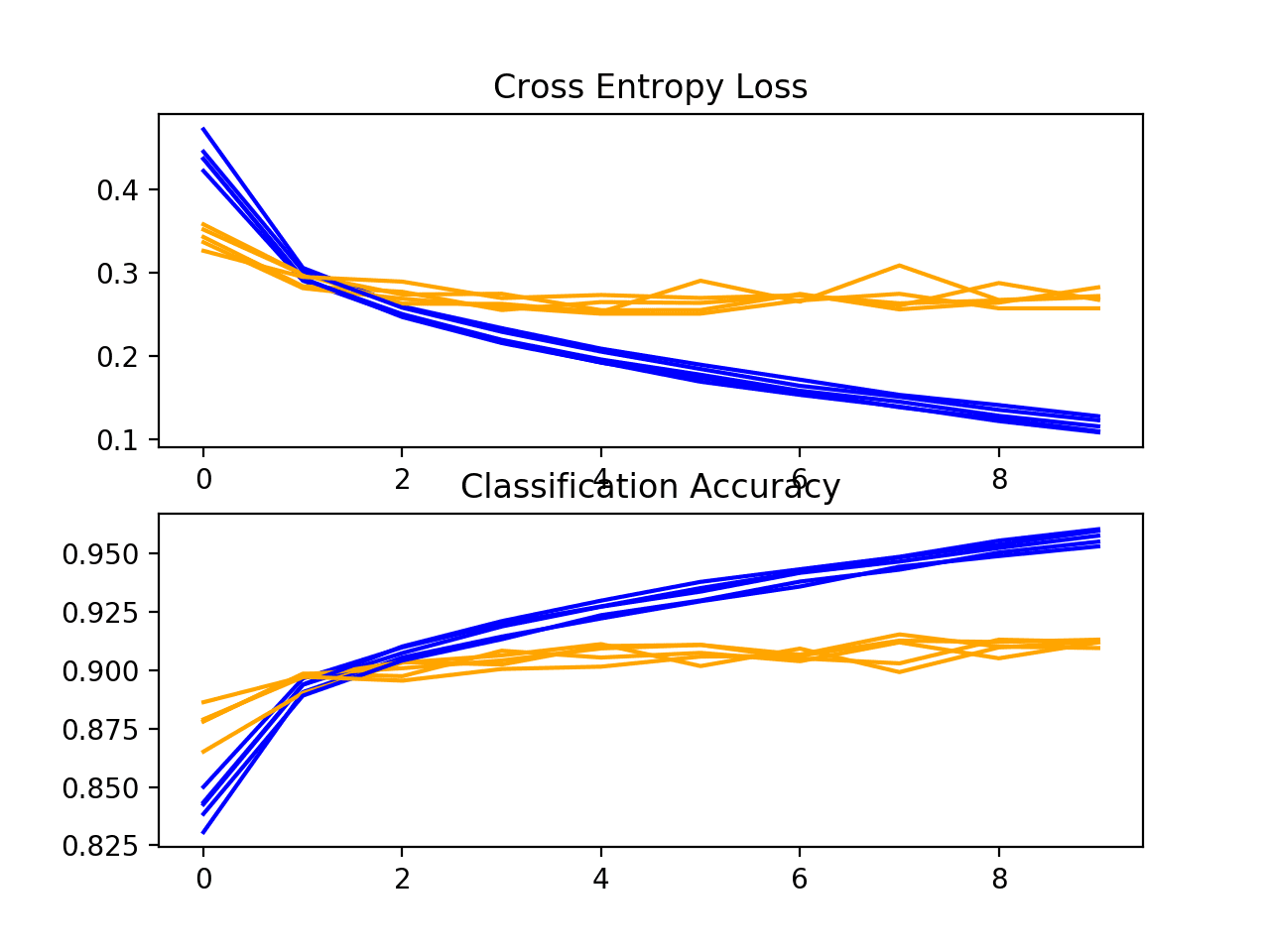

Commencement, the diagnostics involve creating a line plot showing model performance on the train and examination fix during each fold of the k-fold cantankerous-validation. These plots are valuable for getting an idea of whether a model is overfitting, underfitting, or has a good fit for the dataset.

Nosotros will create a single figure with two subplots, one for loss and one for accuracy. Blue lines will signal model performance on the training dataset and orangish lines will indicate operation on the hold out test dataset. The summarize_diagnostics() office below creates and shows this plot given the collected preparation histories.

| # plot diagnostic learning curves def summarize_diagnostics ( histories ) : for i in range ( len ( histories ) ) : # plot loss pyplot . subplot ( 211 ) pyplot . title ( 'Cross Entropy Loss' ) pyplot . plot ( histories [ i ] . history [ 'loss' ] , color = 'bluish' , label = 'railroad train' ) pyplot . plot ( histories [ i ] . history [ 'val_loss' ] , color = 'orange' , characterization = 'test' ) # plot accuracy pyplot . subplot ( 212 ) pyplot . title ( 'Classification Accuracy' ) pyplot . plot ( histories [ i ] . history [ 'accuracy' ] , color = 'bluish' , label = 'train' ) pyplot . plot ( histories [ i ] . history [ 'val_accuracy' ] , color = 'orange' , label = 'examination' ) pyplot . show ( ) |

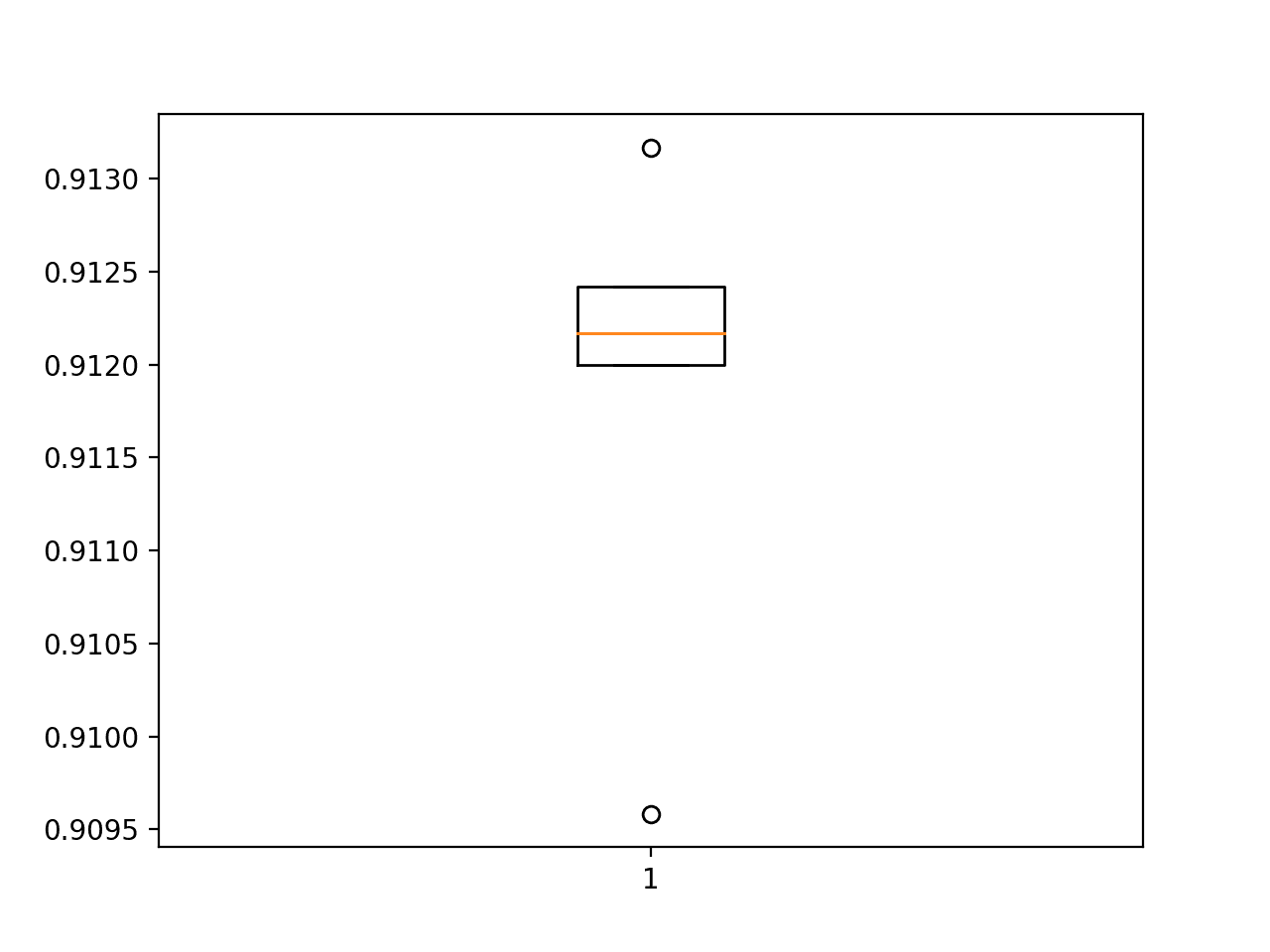

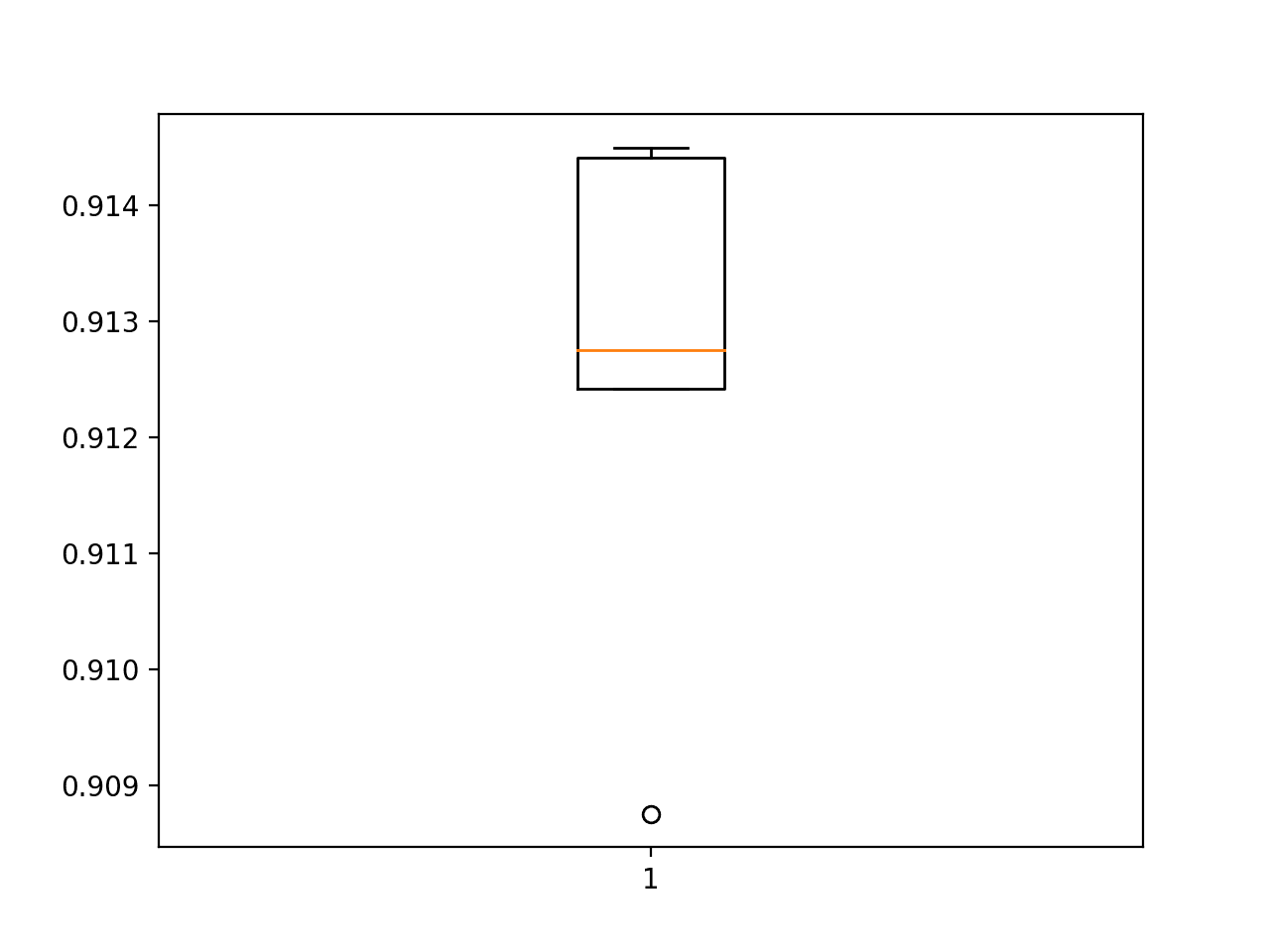

Next, the classification accuracy scores nerveless during each fold tin be summarized by calculating the mean and standard deviation. This provides an estimate of the average expected performance of the model trained on this dataset, with an judge of the average variance in the mean. We will likewise summarize the distribution of scores past creating and showing a box and whisker plot.

The summarize_performance() part below implements this for a given listing of scores nerveless during model evaluation.

| # summarize model operation def summarize_performance ( scores ) : # impress summary print ( 'Accuracy: mean=%.3f std=%.3f, n=%d' % ( mean ( scores ) * 100 , std ( scores ) * 100 , len ( scores ) ) ) # box and whisker plots of results pyplot . boxplot ( scores ) pyplot . show ( ) |

Complete Example

We need a part that will drive the test harness.

This involves calling all of the define functions.

| # run the test harness for evaluating a model def run_test_harness ( ) : # load dataset trainX , trainY , testX , testY = load_dataset ( ) # gear up pixel data trainX , testX = prep_pixels ( trainX , testX ) # evaluate model scores , histories = evaluate_model ( trainX , trainY ) # learning curves summarize_diagnostics ( histories ) # summarize estimated performance summarize_performance ( scores ) |

We at present accept everything we need; the complete code example for a baseline convolutional neural network model on the MNIST dataset is listed below.

| 1 2 three 4 5 6 7 8 ix x 11 12 13 xiv xv sixteen 17 18 nineteen 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 l 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 lxxx 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 | # baseline cnn model for way mnist from numpy import mean from numpy import std from matplotlib import pyplot from sklearn . model_selection import KFold from keras . datasets import fashion_mnist from keras . utils import to_categorical from keras . models import Sequential from keras . layers import Conv2D from keras . layers import MaxPooling2D from keras . layers import Dense from keras . layers import Flatten from keras . optimizers import SGD # load train and test dataset def load_dataset ( ) : # load dataset ( trainX , trainY ) , ( testX , testY ) = fashion_mnist . load_data ( ) # reshape dataset to have a single channel trainX = trainX . reshape ( ( trainX . shape [ 0 ] , 28 , 28 , 1 ) ) testX = testX . reshape ( ( testX . shape [ 0 ] , 28 , 28 , ane ) ) # i hot encode target values trainY = to_categorical ( trainY ) testY = to_categorical ( testY ) render trainX , trainY , testX , testY # scale pixels def prep_pixels ( train , test ) : # convert from integers to floats train_norm = train . astype ( 'float32' ) test_norm = test . astype ( 'float32' ) # normalize to range 0-1 train_norm = train_norm / 255.0 test_norm = test_norm / 255.0 # return normalized images return train_norm , test _norm # define cnn model def define_model ( ) : model = Sequential ( ) model . add ( Conv2D ( 32 , ( three , 3 ) , activation = 'relu' , kernel_initializer = 'he_uniform' , input_shape = ( 28 , 28 , 1 ) ) ) model . add ( MaxPooling2D ( ( ii , two ) ) ) model . add together ( Flatten ( ) ) model . add together ( Dumbo ( 100 , activation = 'relu' , kernel_initializer = 'he_uniform' ) ) model . add ( Dense ( x , activation = 'softmax' ) ) # compile model opt = SGD ( lr = 0.01 , momentum = 0.9 ) model . compile ( optimizer = opt , loss = 'categorical_crossentropy' , metrics = [ 'accuracy' ] ) return model # evaluate a model using k-fold cross-validation def evaluate_model ( dataX , dataY , n_folds = five ) : scores , histories = list ( ) , list ( ) # prepare cross validation kfold = KFold ( n_folds , shuffle = True , random_state = 1 ) # enumerate splits for train_ix , test_ix in kfold . divide ( dataX ) : # define model model = define_model ( ) # select rows for train and test trainX , trainY , testX , testY = dataX [ train_ix ] , dataY [ train_ix ] , dataX [ test_ix ] , dataY [ test_ix ] # fit model history = model . fit ( trainX , trainY , epochs = 10 , batch_size = 32 , validation_data = ( testX , testY ) , verbose = 0 ) # evaluate model _ , acc = model . evaluate ( testX , testY , verbose = 0 ) print ( '> %.3f' % ( acc * 100.0 ) ) # suspend scores scores . append ( acc ) histories . append ( history ) render scores , histories # plot diagnostic learning curves def summarize_diagnostics ( histories ) : for i in range ( len ( histories ) ) : # plot loss pyplot . subplot ( 211 ) pyplot . title ( 'Cantankerous Entropy Loss' ) pyplot . plot ( histories [ i ] . history [ 'loss' ] , color = 'blue' , label = 'train' ) pyplot . plot ( histories [ i ] . history [ 'val_loss' ] , color = 'orangish' , characterization = 'test' ) # plot accuracy pyplot . subplot ( 212 ) pyplot . title ( 'Classification Accurateness' ) pyplot . plot ( histories [ i ] . history [ 'accuracy' ] , color = 'blue' , characterization = 'railroad train' ) pyplot . plot ( histories [ i ] . history [ 'val_accuracy' ] , color = 'orangish' , label = 'test' ) pyplot . testify ( ) # summarize model performance def summarize_performance ( scores ) : # print summary print ( 'Accuracy: hateful=%.3f std=%.3f, n=%d' % ( hateful ( scores ) * 100 , std ( scores ) * 100 , len ( scores ) ) ) # box and whisker plots of results pyplot . boxplot ( scores ) pyplot . show ( ) # run the test harness for evaluating a model def run_test_harness ( ) : # load dataset trainX , trainY , testX , testY = load_dataset ( ) # set up pixel information trainX , testX = prep_pixels ( trainX , testX ) # evaluate model scores , histories = evaluate_model ( trainX , trainY ) # learning curves summarize_diagnostics ( histories ) # summarize estimated performance summarize_performance ( scores ) # entry betoken, run the examination harness run_test_harness ( ) |

Running the instance prints the classification accurateness for each fold of the cantankerous-validation process. This is helpful to get an idea that the model evaluation is progressing.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the case a few times and compare the average consequence.

We can meet that for each fold, the baseline model achieved an error charge per unit below x%, and in two cases 98% and 99% accuracy. These are good results.

| > 91.200 > 91.217 > 90.958 > 91.242 > 91.317 |

Next, a diagnostic plot is shown, giving insight into the learning behavior of the model across each fold.

In this example, we can see that the model generally achieves a good fit, with train and test learning curves converging. There may be some signs of slight overfitting.

Loss-and-Accuracy-Learning-Curves-for-the-Baseline-Model-on-the-Fashion-MNIST-Dataset-During-k-Fold-Cross-Validation

Next, the summary of the model performance is calculated. We can encounter in this case, the model has an estimated skill of about 96%, which is impressive.

| Accuracy: mean=91.187 std=0.121, n=5 |

Finally, a box and whisker plot is created to summarize the distribution of accurateness scores.

Box-and-Whisker-Plot-of-Accuracy-Scores-for-the-Baseline-Model-on-the-Fashion-MNIST-Dataset-Evaluated-Using-one thousand-Fold-Cantankerous-Validation

As we would await, the distribution spread across the low-nineties.

We now have a robust examination harness and a well-performing baseline model.

How to Develop an Improved Model

There are many means that nosotros might explore improvements to the baseline model.

We will look at areas that often result in an improvement, so-called depression-hanging fruit. The first will be a alter to the convolutional operation to add padding and the second will build on this to increment the number of filters.

Padding Convolutions

Adding padding to the convolutional operation can often result in ameliorate model operation, as more of the input image of feature maps are given an opportunity to participate or contribute to the output

By default, the convolutional operation uses 'valid' padding, which means that convolutions are only applied where possible. This can exist changed to 'aforementioned' padding then that zero values are added around the input such that the output has the same size every bit the input.

| . . . model . add ( Conv2D ( 32 , ( 3 , iii ) , padding = 'same' , activation = 'relu' , kernel_initializer = 'he_uniform' , input_shape = ( 28 , 28 , 1 ) ) ) |

The full code listing including the change to padding is provided below for completeness.

| ane 2 iii 4 5 half-dozen 7 8 9 x 11 12 13 14 15 16 17 eighteen 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 forty 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 threescore 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 eighty 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 | # model with padded convolutions for the fashion mnist dataset from numpy import mean from numpy import std from matplotlib import pyplot from sklearn . model_selection import KFold from keras . datasets import fashion_mnist from keras . utils import to_categorical from keras . models import Sequential from keras . layers import Conv2D from keras . layers import MaxPooling2D from keras . layers import Dumbo from keras . layers import Flatten from keras . optimizers import SGD # load railroad train and test dataset def load_dataset ( ) : # load dataset ( trainX , trainY ) , ( testX , testY ) = fashion_mnist . load_data ( ) # reshape dataset to have a unmarried aqueduct trainX = trainX . reshape ( ( trainX . shape [ 0 ] , 28 , 28 , i ) ) testX = testX . reshape ( ( testX . shape [ 0 ] , 28 , 28 , i ) ) # 1 hot encode target values trainY = to_categorical ( trainY ) testY = to_categorical ( testY ) return trainX , trainY , testX , testY # scale pixels def prep_pixels ( train , test ) : # convert from integers to floats train_norm = train . astype ( 'float32' ) test_norm = test . astype ( 'float32' ) # normalize to range 0-i train_norm = train_norm / 255.0 test_norm = test_norm / 255.0 # return normalized images return train_norm , test _norm # define cnn model def define_model ( ) : model = Sequential ( ) model . add together ( Conv2D ( 32 , ( 3 , 3 ) , padding = 'same' , activation = 'relu' , kernel_initializer = 'he_uniform' , input_shape = ( 28 , 28 , 1 ) ) ) model . add ( MaxPooling2D ( ( 2 , 2 ) ) ) model . add ( Flatten ( ) ) model . add together ( Dense ( 100 , activation = 'relu' , kernel_initializer = 'he_uniform' ) ) model . add ( Dense ( 10 , activation = 'softmax' ) ) # compile model opt = SGD ( lr = 0.01 , momentum = 0.9 ) model . compile ( optimizer = opt , loss = 'categorical_crossentropy' , metrics = [ 'accuracy' ] ) render model # evaluate a model using k-fold cantankerous-validation def evaluate_model ( dataX , dataY , n_folds = 5 ) : scores , histories = listing ( ) , listing ( ) # ready cross validation kfold = KFold ( n_folds , shuffle = True , random_state = 1 ) # enumerate splits for train_ix , test_ix in kfold . carve up ( dataX ) : # define model model = define_model ( ) # select rows for train and test trainX , trainY , testX , testY = dataX [ train_ix ] , dataY [ train_ix ] , dataX [ test_ix ] , dataY [ test_ix ] # fit model history = model . fit ( trainX , trainY , epochs = 10 , batch_size = 32 , validation_data = ( testX , testY ) , verbose = 0 ) # evaluate model _ , acc = model . evaluate ( testX , testY , verbose = 0 ) print ( '> %.3f' % ( acc * 100.0 ) ) # append scores scores . append ( acc ) histories . suspend ( history ) return scores , histories # plot diagnostic learning curves def summarize_diagnostics ( histories ) : for i in range ( len ( histories ) ) : # plot loss pyplot . subplot ( 211 ) pyplot . championship ( 'Cantankerous Entropy Loss' ) pyplot . plot ( histories [ i ] . history [ 'loss' ] , color = 'blue' , label = 'railroad train' ) pyplot . plot ( histories [ i ] . history [ 'val_loss' ] , color = 'orange' , label = 'test' ) # plot accuracy pyplot . subplot ( 212 ) pyplot . title ( 'Nomenclature Accuracy' ) pyplot . plot ( histories [ i ] . history [ 'accuracy' ] , color = 'blue' , characterization = 'train' ) pyplot . plot ( histories [ i ] . history [ 'val_accuracy' ] , color = 'orange' , label = 'test' ) pyplot . show ( ) # summarize model performance def summarize_performance ( scores ) : # print summary print ( 'Accuracy: mean=%.3f std=%.3f, n=%d' % ( hateful ( scores ) * 100 , std ( scores ) * 100 , len ( scores ) ) ) # box and whisker plots of results pyplot . boxplot ( scores ) pyplot . bear witness ( ) # run the exam harness for evaluating a model def run_test_harness ( ) : # load dataset trainX , trainY , testX , testY = load_dataset ( ) # prepare pixel data trainX , testX = prep_pixels ( trainX , testX ) # evaluate model scores , histories = evaluate_model ( trainX , trainY ) # learning curves summarize_diagnostics ( histories ) # summarize estimated operation summarize_performance ( scores ) # entry betoken, run the test harness run_test_harness ( ) |

Running the example once more reports model performance for each fold of the cross-validation process.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the boilerplate event.

We tin can see maybe a small improvement in model performance every bit compared to the baseline beyond the cross-validation folds.

| > 90.875 > 91.442 > 91.242 > 91.275 > 91.450 |

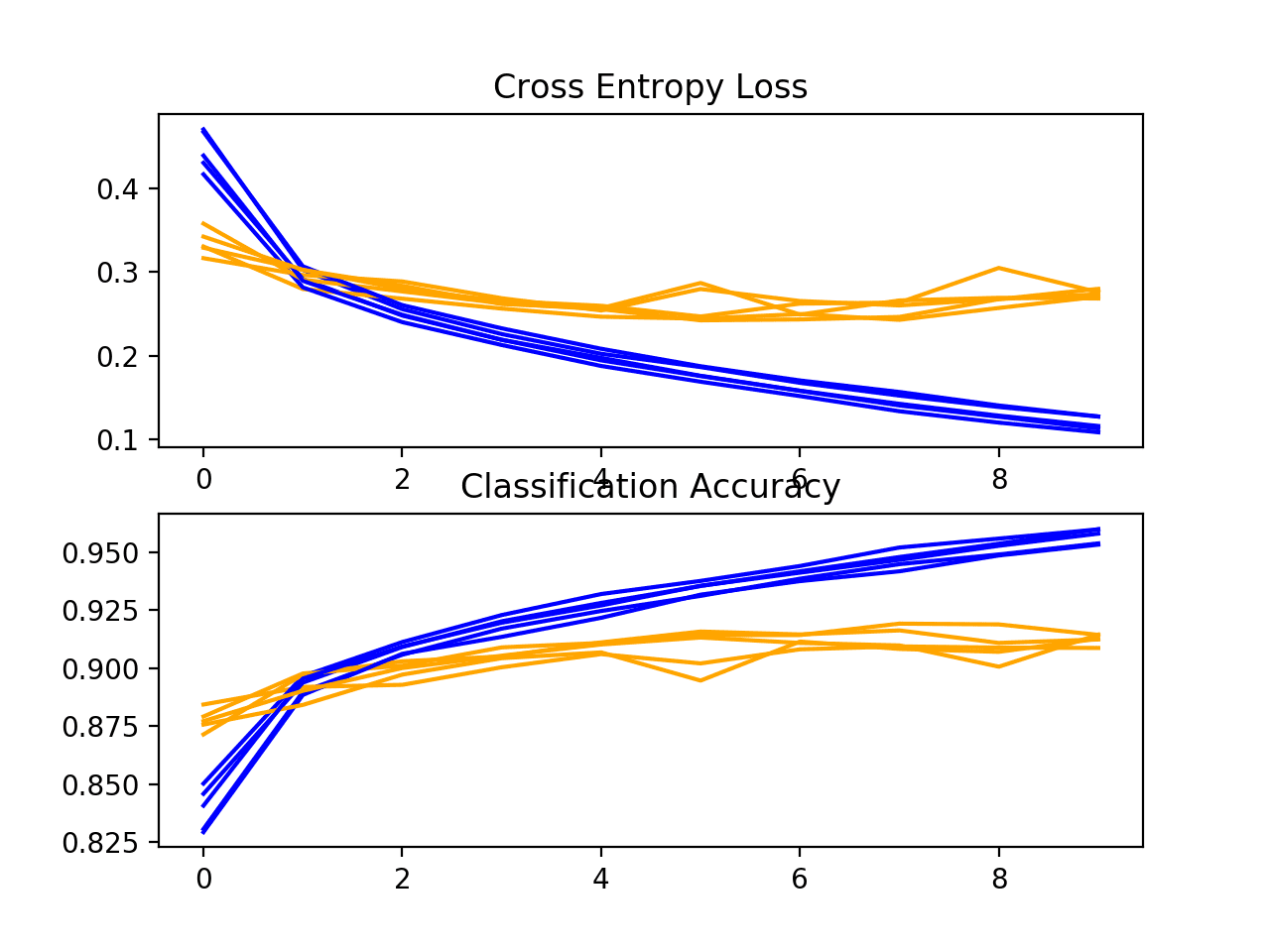

A plot of the learning curves is created. As with the baseline model, we may see some slight overfitting. This could be addressed mayhap with the use of regularization or the training for fewer epochs.

Loss-and-Accuracy-Learning-Curves-for-the-Same-Padding-on-the-Manner-MNIST-Dataset-During-k-Fold-Cross-Validation

Adjacent, the estimated performance of the model is presented, showing performance with a very slight decrease in the hateful accuracy of the model, 91.257% as compared to 91.187% with the baseline model.

This may or may non be a real effect as information technology is inside the bounds of the standard divergence. Perhaps more repeats of the experiment could tease out this fact.

| Accuracy: hateful=91.257 std=0.209, n=5 |

Box-and-Whisker-Plot-of-Accuracy-Scores-for-Aforementioned-Padding-on-the-Fashion-MNIST-Dataset-Evaluated-Using-one thousand-Fold-Cantankerous-Validation

Increasing Filters

An increment in the number of filters used in the convolutional layer can often improve performance, as information technology can provide more opportunity for extracting simple features from the input images.

This is especially relevant when very modest filters are used, such every bit 3×iii pixels.

In this change, we tin increase the number of filters in the convolutional layer from 32 to double that at 64. We will too build upon the possible improvement offered past using 'same' padding.

| . . . model . add together ( Conv2D ( 64 , ( 3 , 3 ) , padding = 'same' , activation = 'relu' , kernel_initializer = 'he_uniform' , input_shape = ( 28 , 28 , 1 ) ) ) |

The full code list including the modify to padding is provided beneath for completeness.

| one 2 three 4 5 half-dozen vii viii 9 10 11 12 13 fourteen 15 xvi 17 18 19 20 21 22 23 24 25 26 27 28 29 thirty 31 32 33 34 35 36 37 38 39 xl 41 42 43 44 45 46 47 48 49 fifty 51 52 53 54 55 56 57 58 59 sixty 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 lxxx 81 82 83 84 85 86 87 88 89 xc 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 | # model with double the filters for the style mnist dataset from numpy import mean from numpy import std from matplotlib import pyplot from sklearn . model_selection import KFold from keras . datasets import fashion_mnist from keras . utils import to_categorical from keras . models import Sequential from keras . layers import Conv2D from keras . layers import MaxPooling2D from keras . layers import Dumbo from keras . layers import Flatten from keras . optimizers import SGD # load train and test dataset def load_dataset ( ) : # load dataset ( trainX , trainY ) , ( testX , testY ) = fashion_mnist . load_data ( ) # reshape dataset to have a single aqueduct trainX = trainX . reshape ( ( trainX . shape [ 0 ] , 28 , 28 , 1 ) ) testX = testX . reshape ( ( testX . shape [ 0 ] , 28 , 28 , 1 ) ) # one hot encode target values trainY = to_categorical ( trainY ) testY = to_categorical ( testY ) return trainX , trainY , testX , testY # scale pixels def prep_pixels ( train , test ) : # convert from integers to floats train_norm = train . astype ( 'float32' ) test_norm = test . astype ( 'float32' ) # normalize to range 0-1 train_norm = train_norm / 255.0 test_norm = test_norm / 255.0 # return normalized images return train_norm , test _norm # define cnn model def define_model ( ) : model = Sequential ( ) model . add together ( Conv2D ( 64 , ( 3 , iii ) , padding = 'same' , activation = 'relu' , kernel_initializer = 'he_uniform' , input_shape = ( 28 , 28 , ane ) ) ) model . add ( MaxPooling2D ( ( 2 , ii ) ) ) model . add ( Flatten ( ) ) model . add ( Dumbo ( 100 , activation = 'relu' , kernel_initializer = 'he_uniform' ) ) model . add ( Dumbo ( 10 , activation = 'softmax' ) ) # compile model opt = SGD ( lr = 0.01 , momentum = 0.9 ) model . compile ( optimizer = opt , loss = 'categorical_crossentropy' , metrics = [ 'accuracy' ] ) return model # evaluate a model using thousand-fold cross-validation def evaluate_model ( dataX , dataY , n_folds = 5 ) : scores , histories = list ( ) , list ( ) # set cross validation kfold = KFold ( n_folds , shuffle = True , random_state = 1 ) # enumerate splits for train_ix , test_ix in kfold . split ( dataX ) : # define model model = define_model ( ) # select rows for train and test trainX , trainY , testX , testY = dataX [ train_ix ] , dataY [ train_ix ] , dataX [ test_ix ] , dataY [ test_ix ] # fit model history = model . fit ( trainX , trainY , epochs = 10 , batch_size = 32 , validation_data = ( testX , testY ) , verbose = 0 ) # evaluate model _ , acc = model . evaluate ( testX , testY , verbose = 0 ) print ( '> %.3f' % ( acc * 100.0 ) ) # append scores scores . append ( acc ) histories . suspend ( history ) return scores , histories # plot diagnostic learning curves def summarize_diagnostics ( histories ) : for i in range ( len ( histories ) ) : # plot loss pyplot . subplot ( 211 ) pyplot . title ( 'Cross Entropy Loss' ) pyplot . plot ( histories [ i ] . history [ 'loss' ] , color = 'blue' , characterization = 'train' ) pyplot . plot ( histories [ i ] . history [ 'val_loss' ] , color = 'orangish' , label = 'test' ) # plot accuracy pyplot . subplot ( 212 ) pyplot . title ( 'Nomenclature Accuracy' ) pyplot . plot ( histories [ i ] . history [ 'accurateness' ] , color = 'blue' , characterization = 'train' ) pyplot . plot ( histories [ i ] . history [ 'val_accuracy' ] , color = 'orange' , characterization = 'exam' ) pyplot . bear witness ( ) # summarize model performance def summarize_performance ( scores ) : # print summary print ( 'Accurateness: mean=%.3f std=%.3f, n=%d' % ( mean ( scores ) * 100 , std ( scores ) * 100 , len ( scores ) ) ) # box and whisker plots of results pyplot . boxplot ( scores ) pyplot . show ( ) # run the test harness for evaluating a model def run_test_harness ( ) : # load dataset trainX , trainY , testX , testY = load_dataset ( ) # prepare pixel data trainX , testX = prep_pixels ( trainX , testX ) # evaluate model scores , histories = evaluate_model ( trainX , trainY ) # learning curves summarize_diagnostics ( histories ) # summarize estimated operation summarize_performance ( scores ) # entry point, run the test harness run_test_harness ( ) |

Running the example reports model performance for each fold of the cross-validation process.

Notation: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the instance a few times and compare the average issue.

The per-fold scores may propose some further improvement over the baseline and using aforementioned padding alone.

| > ninety.917 > 90.908 > 90.175 > 91.158 > 91.408 |

A plot of the learning curves is created, in this case showing that the models notwithstanding have a reasonable fit on the problem, with a small sign of some of the runs overfitting.

Loss-and-Accurateness-Learning-Curves-for-the-More than-Filters-and-Padding-on-the-Style-MNIST-Dataset-During-grand-Fold-Cross-Validation

Adjacent, the estimated performance of the model is presented, showing a small improvement in functioning as compared to the baseline from 90.913% to 91.257%.

Again, the change is still inside the bounds of the standard deviation, and it is not articulate whether the effect is real.

| Accuracy: mean=90.913 std=0.412, n=five |

How to Finalize the Model and Make Predictions

The process of model comeback may go on for as long as we have ideas and the time and resources to test them out.

At some point, a final model configuration must be chosen and adopted. In this case, nosotros will go on things unproblematic and utilize the baseline model as the concluding model.

Outset, we volition finalize our model, but fitting a model on the entire grooming dataset and saving the model to file for later employ. We will then load the model and evaluate its performance on the hold out test dataset, to get an idea of how well the chosen model actually performs in practice. Finally, we will use the saved model to make a prediction on a single image.

Save Final Model

A concluding model is typically fit on all available data, such as the combination of all train and examination dataset.

In this tutorial, we are intentionally holding back a examination dataset and then that nosotros can estimate the performance of the last model, which can be a good idea in practice. As such, we will fit our model on the preparation dataset only.

| # fit model model . fit ( trainX , trainY , epochs = 10 , batch_size = 32 , verbose = 0 ) |

Once fit, we can save the final model to an h5 file by calling the save() role on the model and pass in the called filename.

| # save model model . salve ( 'final_model.h5' ) |

Annotation: saving and loading a Keras model requires that the h5py library is installed on your workstation.

The complete example of plumbing equipment the terminal model on the training dataset and saving it to file is listed beneath.

| 1 2 three 4 v vi 7 8 9 x 11 12 13 14 15 16 17 18 xix twenty 21 22 23 24 25 26 27 28 29 xxx 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 threescore 61 | # save the final model to file from keras . datasets import fashion_mnist from keras . utils import to_categorical from keras . models import Sequential from keras . layers import Conv2D from keras . layers import MaxPooling2D from keras . layers import Dense from keras . layers import Flatten from keras . optimizers import SGD # load train and exam dataset def load_dataset ( ) : # load dataset ( trainX , trainY ) , ( testX , testY ) = fashion_mnist . load_data ( ) # reshape dataset to have a single channel trainX = trainX . reshape ( ( trainX . shape [ 0 ] , 28 , 28 , 1 ) ) testX = testX . reshape ( ( testX . shape [ 0 ] , 28 , 28 , 1 ) ) # ane hot encode target values trainY = to_categorical ( trainY ) testY = to_categorical ( testY ) return trainX , trainY , testX , testY # scale pixels def prep_pixels ( train , test ) : # catechumen from integers to floats train_norm = train . astype ( 'float32' ) test_norm = test . astype ( 'float32' ) # normalize to range 0-1 train_norm = train_norm / 255.0 test_norm = test_norm / 255.0 # return normalized images return train_norm , test _norm # define cnn model def define_model ( ) : model = Sequential ( ) model . add ( Conv2D ( 32 , ( three , 3 ) , activation = 'relu' , kernel_initializer = 'he_uniform' , input_shape = ( 28 , 28 , 1 ) ) ) model . add ( MaxPooling2D ( ( 2 , ii ) ) ) model . add ( Flatten ( ) ) model . add together ( Dense ( 100 , activation = 'relu' , kernel_initializer = 'he_uniform' ) ) model . add ( Dense ( ten , activation = 'softmax' ) ) # compile model opt = SGD ( lr = 0.01 , momentum = 0.ix ) model . compile ( optimizer = opt , loss = 'categorical_crossentropy' , metrics = [ 'accuracy' ] ) render model # run the test harness for evaluating a model def run_test_harness ( ) : # load dataset trainX , trainY , testX , testY = load_dataset ( ) # ready pixel information trainX , testX = prep_pixels ( trainX , testX ) # define model model = define_model ( ) # fit model model . fit ( trainX , trainY , epochs = 10 , batch_size = 32 , verbose = 0 ) # relieve model model . save ( 'final_model.h5' ) # entry point, run the test harness run_test_harness ( ) |

After running this example, you volition now take a 1.two-megabyte file with the proper noun 'final_model.h5' in your current working directory.

Evaluate Final Model

We can now load the final model and evaluate it on the agree out examination dataset.

This is something we might do if nosotros were interested in presenting the functioning of the chosen model to project stakeholders.

The model can exist loaded via the load_model() role.

The complete example of loading the saved model and evaluating it on the test dataset is listed below.

| ane 2 3 4 5 6 seven eight nine ten xi 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 xl 41 42 | # evaluate the deep model on the test dataset from keras . datasets import fashion_mnist from keras . models import load_model from keras . utils import to _chiselled # load train and test dataset def load_dataset ( ) : # load dataset ( trainX , trainY ) , ( testX , testY ) = fashion_mnist . load_data ( ) # reshape dataset to have a single channel trainX = trainX . reshape ( ( trainX . shape [ 0 ] , 28 , 28 , 1 ) ) testX = testX . reshape ( ( testX . shape [ 0 ] , 28 , 28 , 1 ) ) # ane hot encode target values trainY = to_categorical ( trainY ) testY = to_categorical ( testY ) render trainX , trainY , testX , testY # scale pixels def prep_pixels ( railroad train , examination ) : # catechumen from integers to floats train_norm = railroad train . astype ( 'float32' ) test_norm = test . astype ( 'float32' ) # normalize to range 0-1 train_norm = train_norm / 255.0 test_norm = test_norm / 255.0 # return normalized images return train_norm , test _norm # run the examination harness for evaluating a model def run_test_harness ( ) : # load dataset trainX , trainY , testX , testY = load_dataset ( ) # prepare pixel data trainX , testX = prep_pixels ( trainX , testX ) # load model model = load_model ( 'final_model.h5' ) # evaluate model on test dataset _ , acc = model . evaluate ( testX , testY , verbose = 0 ) impress ( '> %.3f' % ( acc * 100.0 ) ) # entry bespeak, run the examination harness run_test_harness ( ) |

Running the example loads the saved model and evaluates the model on the concur out examination dataset.

The nomenclature accuracy for the model on the examination dataset is calculated and printed.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the instance a few times and compare the boilerplate outcome.

In this case, we can come across that the model achieved an accurateness of 90.990%, or only less than 10% nomenclature mistake, which is not bad.

Make Prediction

We can apply our saved model to brand a prediction on new images.

The model assumes that new images are grayscale, they have been segmented so that i image contains one centered slice of clothing on a black background, and that the size of the paradigm is square with the size 28×28 pixels.

Beneath is an image extracted from the MNIST exam dataset. You can save information technology in your current working directory with the filename 'sample_image.png'.

Sample Clothing (Pullover)

- Download Image (sample_image.png)

Nosotros will pretend this is an entirely new and unseen epitome, prepared in the required fashion, and come across how we might apply our saved model to predict the integer that the image represents. For this case, we expect class "ii" for "Pullover" (also called a jumper).

First, we can load the image, strength it to exist grayscale format, and force the size to be 28×28 pixels. The loaded image can then be resized to have a single channel and represent a single sample in a dataset. The load_image() part implements this and will return the loaded epitome ready for classification.

Chiefly, the pixel values are prepared in the aforementioned way as the pixel values were prepared for the training dataset when fitting the final model, in this case, normalized.

| # load and set up the image def load_image ( filename ) : # load the paradigm img = load_img ( filename , grayscale = True , target_size = ( 28 , 28 ) ) # catechumen to array img = img_to_array ( img ) # reshape into a single sample with 1 channel img = img . reshape ( 1 , 28 , 28 , 1 ) # prepare pixel data img = img . astype ( 'float32' ) img = img / 255.0 return img |

Next, nosotros tin load the model as in the previous section and call the predict_classes() function to predict the vesture in the epitome.

| # predict the class result = model . predict_classes ( img ) |

The complete case is listed beneath.

| 1 2 three 4 v 6 7 8 9 ten 11 12 13 14 15 16 17 eighteen nineteen 20 21 22 23 24 25 26 27 28 29 30 | # make a prediction for a new paradigm. from keras . preprocessing . image import load_img from keras . preprocessing . image import img_to_array from keras . models import load _model # load and prepare the paradigm def load_image ( filename ) : # load the image img = load_img ( filename , grayscale = Truthful , target_size = ( 28 , 28 ) ) # convert to assortment img = img_to_array ( img ) # reshape into a single sample with 1 channel img = img . reshape ( 1 , 28 , 28 , i ) # set pixel data img = img . astype ( 'float32' ) img = img / 255.0 return img # load an image and predict the grade def run_example ( ) : # load the image img = load_image ( 'sample_image.png' ) # load model model = load_model ( 'final_model.h5' ) # predict the class consequence = model . predict_classes ( img ) print ( issue [ 0 ] ) # entry betoken, run the example run_example ( ) |

Running the instance first loads and prepares the image, loads the model, then correctly predicts that the loaded image represents a pullover or class 'ii'.

Extensions

This department lists some ideas for extending the tutorial that yous may wish to explore.

- Regularization. Explore how adding regularization impacts model performance every bit compared to the baseline model, such as weight disuse, early stopping, and dropout.

- Tune the Learning Rate. Explore how different learning rates bear upon the model performance equally compared to the baseline model, such as 0.001 and 0.0001.

- Melody Model Depth. Explore how calculation more than layers to the model touch on the model functioning as compared to the baseline model, such every bit another cake of convolutional and pooling layers or another dense layer in the classifier part of the model.

If you explore whatsoever of these extensions, I'd dear to know.

Mail service your findings in the comments beneath.

Further Reading

This section provides more than resources on the topic if yous are looking to become deeper.

APIs

- Keras Datasets API

- Keras Datasets Code

- sklearn.model_selection.KFold API

Articles

- Fashion-MNIST GitHub Repository

Summary

In this tutorial, yous discovered how to develop a convolutional neural network for clothing classification from scratch.

Specifically, yous learned:

- How to develop a exam harness to develop a robust evaluation of a model and establish a baseline of functioning for a classification task.

- How to explore extensions to a baseline model to improve learning and model capacity.

- How to develop a finalized model, evaluate the performance of the final model, and apply it to make predictions on new images.

Practice you take any questions?

Inquire your questions in the comments below and I will exercise my best to answer.

0 Response to "Fashion Mnist Push Accuracy Higher Than 50 Percent"

Post a Comment